Builders Don’t Buy Frameworks Until They Recognize the Failure

Why responsibility drift is a second-order diagnosis.

Paid subscribers see the discipline while it’s still forming — before it’s polished into a framework. You’ll see decision briefs, pattern notes, and method refinements written while situations are still unfolding.

No one is buying my Declare Responsibility Toolkit. I can think of two good reasons.

AI problems often start earlier in the system than people think.

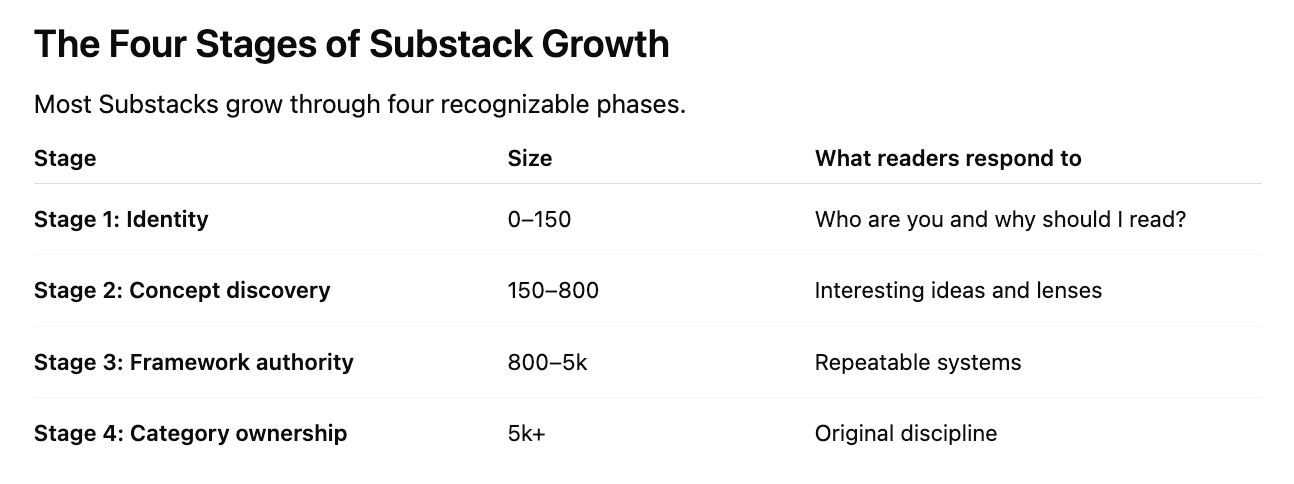

I’m in Stage 2 of Substack growth, which optimizes for discovery.

This post captures some strategy notes on both points to help me optimize for concept discovery.

What is the signal people are noticing but can’t explain?

Sometimes my post ideas come from my own experiments, or from wanting to unpack a concept and see how it works. Recently, I’ve felt the need to write posts that help people connect more clearly to what my work is meant to solve: no AI system surprises in prod.

Writing those bridge articles felt like the right thing to do, but I could feel myself drifting away from my focus. I also suspected that letting AI help me think about strategy was part of the problem.

I’m still in the phase where I update my profile and publication description weekly as I slowly find my space on Substack.

Substack Check-In

My publication description still resonates with me:

Design AI systems that stay in their lane—clear job, enforced boundaries, behavior you can explain.

All of my posts come from my own curiosity and discovery as I navigate what I need to know about AI systems. But writing bridge articles pulled me off the case. It felt like taking a vacation in the middle of a project and coming back to a few new stories and epics.

Substack Calibration Check-In

Second, I use AI to assess my Substack Stats to help me validate my growth stage:

With ~180 free subscribers, I’m in Stage 2: I have interesting ideas and lenses within the sea of AI content that floats around Substack. Honestly, I’ve also been placed in Stage 1 or Stage 2.5 depending on the AI conversation, so let’s not read too much into this.

The main idea is that discovery content works best when readers recognize themselves. Abstract frameworks don’t trigger recognition.

Abstract Pain Doesn’t Need to Be Solved

Right now, I’m interesting, but not useful to most builders because I haven’t effectively matched my solution to their problem.

My Declare Responsibility Toolkit solves this problem:

“Agents drift because responsibility wasn’t clearly declared.”

But most builders don’t think they have that problem yet.

They think their problems are:

hallucinations

prompt quality

model capability

eval metrics

tool calling errors

Drift from unclear responsibility is a second-order diagnosis. People usually only see it after they’ve built agents for a while.

People buy frameworks after they recognize the failure mode.

Help Builders Diagnose the Failure

Right now many readers are still learning what drift is, how agents behave, and how responsibility works. The mental model is developing.

Is my problem actually responsibility drift?

Responsibility Engineering is emerging from observation. The discipline is forming as I study repeated behavior patterns in agent systems.

I’m going to focus on signals that help builders diagnose whether their problem is actually responsibility drift.

Agent Behavior Diagnosis

If your agent is doing any of these, the problem may not be the model. It may be the job definition.

Signal 1 — Unrequested actions

The agent:

makes recommendations that weren’t requested

continues a workflow after completing the assigned step
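To make this signal concrete, here is a minimal sketch of how it could be checked against an agent's action log. It assumes the job has been written down as an explicit set of allowed actions and a final step. The `Responsibility` class, the `unrequested_actions` function, and the action names are all hypothetical illustrations, not part of any particular agent framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Responsibility:
    """A declared job: what the agent may do and where it must stop (hypothetical shape)."""
    job: str
    allowed_actions: frozenset
    final_step: str


def unrequested_actions(responsibility, action_log):
    """Return (action, reason) pairs for logged actions outside the declared job."""
    flagged = []
    finished = False
    for action in action_log:
        if finished:
            # Anything after the assigned step is the agent continuing the workflow.
            flagged.append((action, "continued after assigned step"))
        elif action not in responsibility.allowed_actions:
            # The action was never part of the declared job.
            flagged.append((action, "not in declared job"))
        if action == responsibility.final_step:
            finished = True
    return flagged


# Illustrative data only: a summarizer that also recommends and emails.
summarizer = Responsibility(
    job="Summarize the support ticket",
    allowed_actions=frozenset({"read_ticket", "write_summary"}),
    final_step="write_summary",
)
log = ["read_ticket", "recommend_refund", "write_summary", "email_customer"]
flagged = unrequested_actions(summarizer, log)
for action, reason in flagged:
    print(f"{action}: {reason}")
```

The point of the sketch is that the check is only possible once the job is declared. Without the `allowed_actions` and `final_step` fields, there is nothing to compare the log against.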

Signal 2 — Scope expansion

The agent begins to:

interpret context as instruction

solve adjacent problems you didn’t ask it to solve

Signal 3 — Confident wrongness

The agent consistently produces:

coherent reasoning

incorrect assumptions

repeated logical mistakes

This is reliable behavior applied to the wrong job.

Signal 4 — Hard-to-explain behavior

When someone asks:

“Why did the agent do that?”

You can’t trace the behavior back to a clear responsibility definition.
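One way to picture that trace is as a lookup from an action back to the clause of the job that authorizes it. The sketch below assumes the responsibility has been written as a small set of clauses, each naming the actions it covers. The clause text, the action names, and the `explain` function are hypothetical and only illustrate the idea.

```python
from typing import Optional

# Hypothetical declaration: each clause of the job names the actions it authorizes.
DECLARED_CLAUSES = {
    "Read the ticket the user selected": {"read_ticket"},
    "Write a summary of that ticket": {"write_summary"},
}


def explain(action: str, clauses: dict = DECLARED_CLAUSES) -> Optional[str]:
    """Return the clause that authorizes the action, or None if no clause does."""
    for clause, actions in clauses.items():
        if action in actions:
            return clause
    return None


for action in ["write_summary", "email_customer"]:
    clause = explain(action)
    if clause is None:
        print(f"{action}: no declared responsibility explains this")
    else:
        print(f"{action}: authorized by '{clause}'")
```

When `explain` returns `None`, the answer to "Why did the agent do that?" is not in the job definition, which is the signal described here.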

Once someone sees a signal, they usually want a fix. My toolkit can help with that step.

In the next sections, I’ll walk through my Reader’s Mental Model and the opportunities I’m exploring to help people develop this diagnostic skill.